Artificial intelligence is showing up in all corners of the marketplace to replace time-consuming, error-prone manual tasks with automated efficiency. It powers the chatbots that we encounter when seeking customer service, enables financial transactions, audits tax returns, screens job applicants, and even alerts physicians to health risks they may have overlooked.

While there is no doubt that AI technology will soon be embedded into nearly everything we do, consumers still have a fair amount of concern about it. With fears that range from the robot rebellion scene in Terminator to simple concerns about data privacy, many consumers have approached AI with a dose of skepticism.

That’s why it was such a breakthrough when a

Those kinds of results suggest that AI is more than just a convenience. The technology is opening up new segments of the market that didn’t exist before by redefining conventional notions about risk. And, in doing so, it is democratizing lending, rather than making it more restrictive.

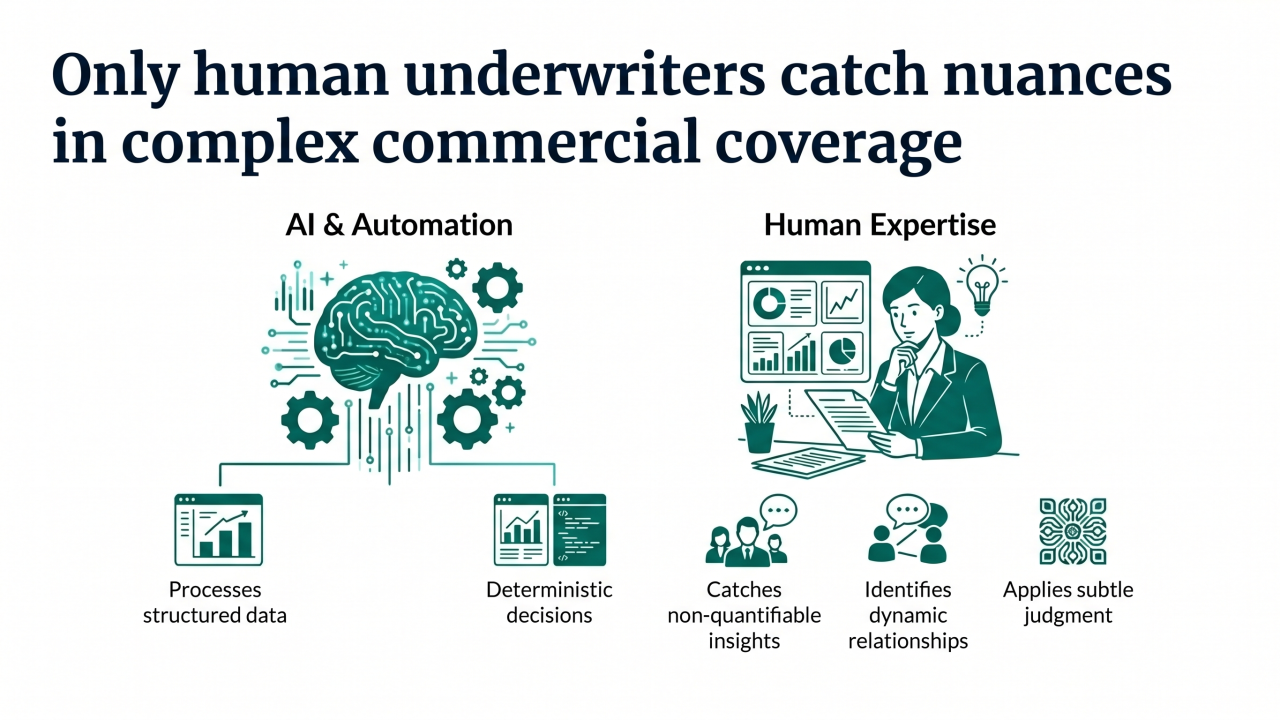

With AI generating profound improvements to loan underwriting, is it only a matter of time before similar capabilities are introduced to P&C underwriting? While the business case is undeniable, P&C insurers will still need to address the issue of public perception of AI before they can move headlong into algorithmic underwriting.

Based on the voice-of-the-customer research we’ve been conducting at J.D. Power, there is a clear path ahead for insurers that want to transition into AI-enabled underwriting, but there are also a few pitfalls they need to avoid.

One of those initial hurdles is the complicated issue of data privacy. According to our research, a full 80% of financial institutions’ customers are confident that their data is secure. However, when we look at automobiles and consumer willingness to share driving information, 87% are somewhat or very concerned about security. At least for now, consumers put their driving data in a different category than their financial data when it comes to perceptions about privacy and security.

Adding to the complexity, there are stark differences in consumer willingness to share driving data based on age. Among members of Generation Z, for example, 83% of consumers “probably” or “definitely” would share their driving data. For Generation X, that number falls to 63%.

It is also worth noting that those who are willing to share their data are not willing to share for free—they expect an exchange of value in the form of discounts or additional services.

Consumers recognize that vehicle information, driving behavior and owner information are relevant items an insurance company may need to provide a discount, but they clearly are not fully comfortable (yet) with data security. Further, they aren’t interested in sharing all their data—they would prefer to share specific data that can be traced to the discounts or services received. What these potential conflicts likely indicate is that consumers need time to get comfortable with a technology and the value it delivers before widespread adoption is possible.

For evidence of a model that currently works well in this regard, consider the widespread uptake of navigation apps, with 84% of consumers already using this technology on their smartphones. The key takeaway here is that AI applications that provide clear value are likely to be adopted.

Secondly, we explore consumer attitudes towards algorithms. Here we find some revealing views that may appear contradictory given the LendingClub results observed above. A recent

We take away a few key lessons from these results. First, consumers are not inherently against sharing data and subjecting it to complex algorithms to generate better outcomes. They are, however, skeptical and want to be confident in the whole process. Second, they want a voice in what data is shared and how it is used to ensure transparency and explainability of outcomes.

While it may be possible to simply demonstrate the results, adoption rates may increase when consumers are made broadly aware of the inputs, the outputs and how they are derived. Third, value needs to be evident from the start. ‘Give me your data and we’ll see what we can do’ isn’t likely to generate trust. Rather, a concerted effort to articulate the value offered, highlight the factors that data sharing will impact and then explain algorithm results in the context of ‘me’ versus ‘others’ will drive acceptance. Finally, consumers seek better outcomes. Those companies that provide them in the way consumers want are likely to win the battle of attraction and retention in the low-growth, highly competitive personal lines insurance market.

We believe the future potential of advanced technologies to deliver better outcomes is high in the personal lines insurance market. By listening to the voice of the customer and introducing these new technologies with care, carriers can maximize adoption rates to better recover their financial investments.