Can the insurance industry learn to love big data? Of course it can, as long as it embraces the potential to pull diamond insights out of mountains of coal. But, as is the case across all industries, insurance organizations have only just begun to scratch the surface of what is possible.

A new white paper

In this paper, the use case is applying big data to personalize auto insurance premiums, based on data streaming in from telematics combined with actuarial information. The challenge is to offer low premiums, but only to customers who are the least likely to make a claim. On-board sensors “capture driving data, such as routes driven, miles driven, time of day, and braking abruptness. This data is used to assess driver risk; they compare individual driving patterns with other statistical information, such as average miles driven in your state, and peak hours of drivers on the road. Driver risk plus actuarial information is then correlated with policy and profile information to offer a competitive and more profitable rate for the company.”
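To make the idea concrete, here is a minimal sketch of how driving data might be turned into a relative risk score and then into a personalized premium. It is not taken from the paper: the `DrivingSummary` fields, the weights, the "typical driver" baselines (25 percent of miles at peak hours, one abrupt stop per 100 miles) and the floor and cap on the premium are all illustrative assumptions, not actuarial values.

```python
from dataclasses import dataclass


@dataclass
class DrivingSummary:
    """Hypothetical per-driver telematics summary for one policy period."""
    miles_driven: float
    peak_hour_miles: float  # miles driven during peak traffic hours
    hard_brakes: int        # number of abrupt braking events


def risk_score(summary: DrivingSummary, state_avg_miles: float) -> float:
    """Return a relative risk score where 1.0 means a typical driver.

    Weights and baselines are assumptions chosen for illustration only.
    """
    if summary.miles_driven <= 0 or state_avg_miles <= 0:
        raise ValueError("mileage values must be positive")

    # Compare the individual with statistical baselines.
    mileage_ratio = summary.miles_driven / state_avg_miles
    peak_share = summary.peak_hour_miles / summary.miles_driven
    brakes_per_100_miles = 100 * summary.hard_brakes / summary.miles_driven

    return (
        0.5 * mileage_ratio
        + 0.3 * (peak_share / 0.25)          # assumed baseline: 25% peak miles
        + 0.2 * (brakes_per_100_miles / 1.0)  # assumed baseline: 1 per 100 miles
    )


def personalized_premium(base_premium: float, score: float,
                         floor: float = 0.7, cap: float = 1.5) -> float:
    """Scale the actuarial base premium by the risk score, within limits."""
    multiplier = min(max(score, floor), cap)
    return base_premium * multiplier
```

In this sketch a cautious, low-mileage driver bottoms out at the floor multiplier, so the discount is real but bounded. An insurer would fit the weights from claims history rather than choose them by hand.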

The paper recommends adoption of the Hadoop big data framework for packaging up the mounds of sensor information, which is then loaded into a master data management framework. In this environment, information is accessible by business intelligence and analytic applications—as well as analysts themselves—across the enterprise.

Of course, Oracle's home turf is traditional relational database management systems, which handle structured data that typically comes out of transactional systems. That isn't far from where most insurers stand: they are heavily invested in traditional database systems and need to put these assets to work within the new big data realm. There's a place for both traditional databases and the new breed of “NoSQL” databases, built to handle unstructured files such as weblogs, documents, videos and graphics.

As a result, the paper notes, new IT strategies are mushrooming, including “brute force assaults on massive information sources, and filtering data through specialized parallel processing and indexing mechanisms. The results are correlated across time and meaning and often merged with traditional corporate data sources.”
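A toy version of that filter, correlate and merge pattern might look like the sketch below. The event fields (`policy_id`, `timestamp`, `decel_g`), the 0.4 g braking threshold and the shape of the policy records are all hypothetical, and a real deployment would run the first step as a parallel job over far more data rather than a single loop.

```python
from collections import defaultdict
from datetime import datetime


def daily_hard_brakes(events, threshold_g=0.4):
    """Filter raw sensor events and count abrupt stops per (policy, day).

    `threshold_g` is an assumed cutoff for what counts as abrupt braking.
    """
    counts = defaultdict(int)
    for event in events:
        if event["decel_g"] >= threshold_g:  # filter step
            day = datetime.fromisoformat(event["timestamp"]).date()
            counts[(event["policy_id"], day)] += 1  # correlate across time
    return dict(counts)


def merge_with_policies(counts, policies):
    """Attach per-policy totals to structured policy records."""
    totals = defaultdict(int)
    for (policy_id, _day), n in counts.items():
        totals[policy_id] += n
    # Policies with no qualifying events get a count of zero.
    return [
        {**policy, "hard_brakes": totals.get(policy["policy_id"], 0)}
        for policy in policies
    ]
```

The merged records are ordinary structured rows, which is the point: once the sensor stream has been boiled down, existing business intelligence tools can work with it.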

The paper outlines big data best practices that will meet the needs of insurance companies seeking to get more value out of the mountains of data now available to them:

1. Align big data with specific business goals: In

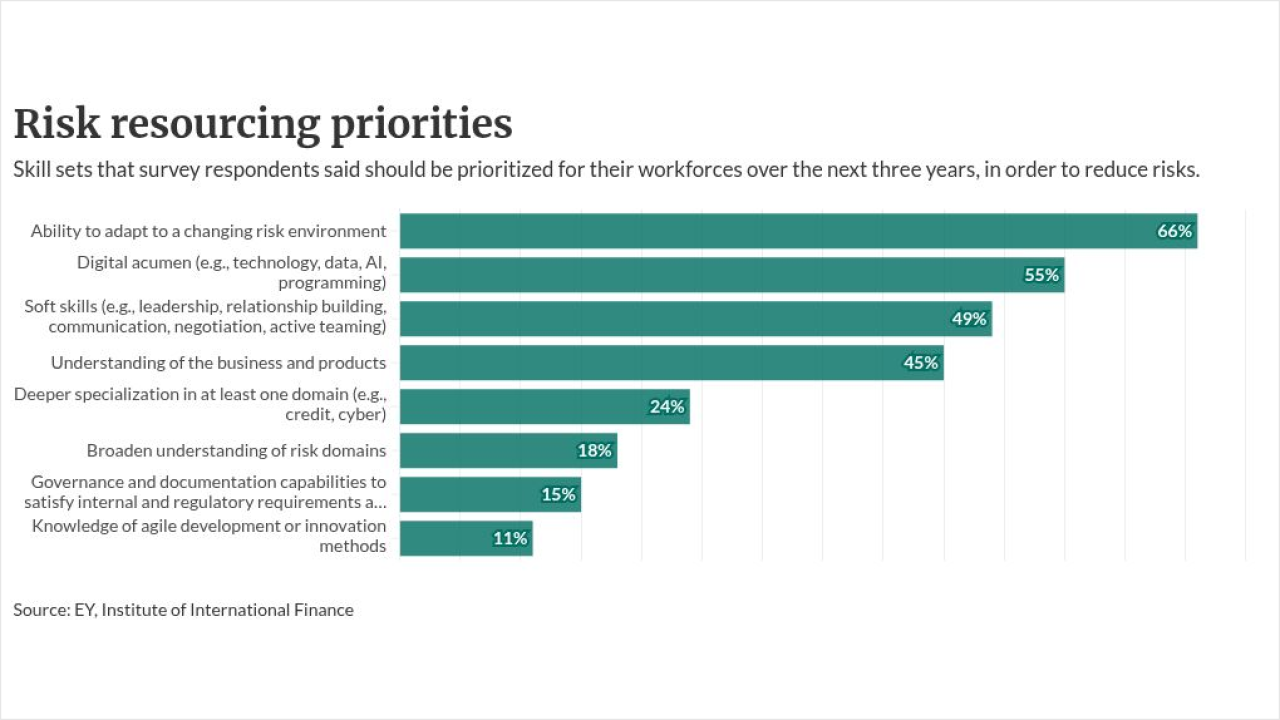

2. Ease skills shortage with standards and governance: Since big data has so much potential, there's a growing shortage of professionals who can manage and mine information. Short of offering huge signing bonuses, the best way to overcome potential skills issues is standardizing big data efforts within an IT governance program.

3. Optimize knowledge transfer with a center of excellence: “Whether big data is a new or expanding investment, the soft and hard costs can be an investment shared across the enterprise.”

4. Top payoff is aligning unstructured with structured data: “By connecting high density big data to the structured data you are already collecting can bring even greater clarity.”

5. Plan your sandbox for performance: Sometimes, it may be difficult to even know what you are looking for. “Management and IT needs to support this 'lack of direction' or 'lack of clear requirement.'”

6. Align with the cloud operating model: “Analytical sandboxes should be created on-demand and resource management needs to have a control of the entire data flow, from pre-processing, integration, in-database summarization, post-processing, and analytical modeling. A well planned private and public cloud provisioning and security strategy plays an integral role in supporting these changing requirements.”

Joe McKendrick is an author, consultant, blogger and frequent INN contributor specializing in information technology.


This blog was exclusively written for Insurance Networking News. It may not be reposted or reused without permission from Insurance Networking News.
