As artificial intelligence plays a greater role throughout the insurance industry, questions arise about AI risk, how it's changing policies and whether to trust the output of an AI-driven decision.

Recent stories cover these themes and explore whether the industry is prepared for the disruption brought on by new technology. Read more below.

Businesses overestimate cyber resilience as AI risk rises, Beazley finds

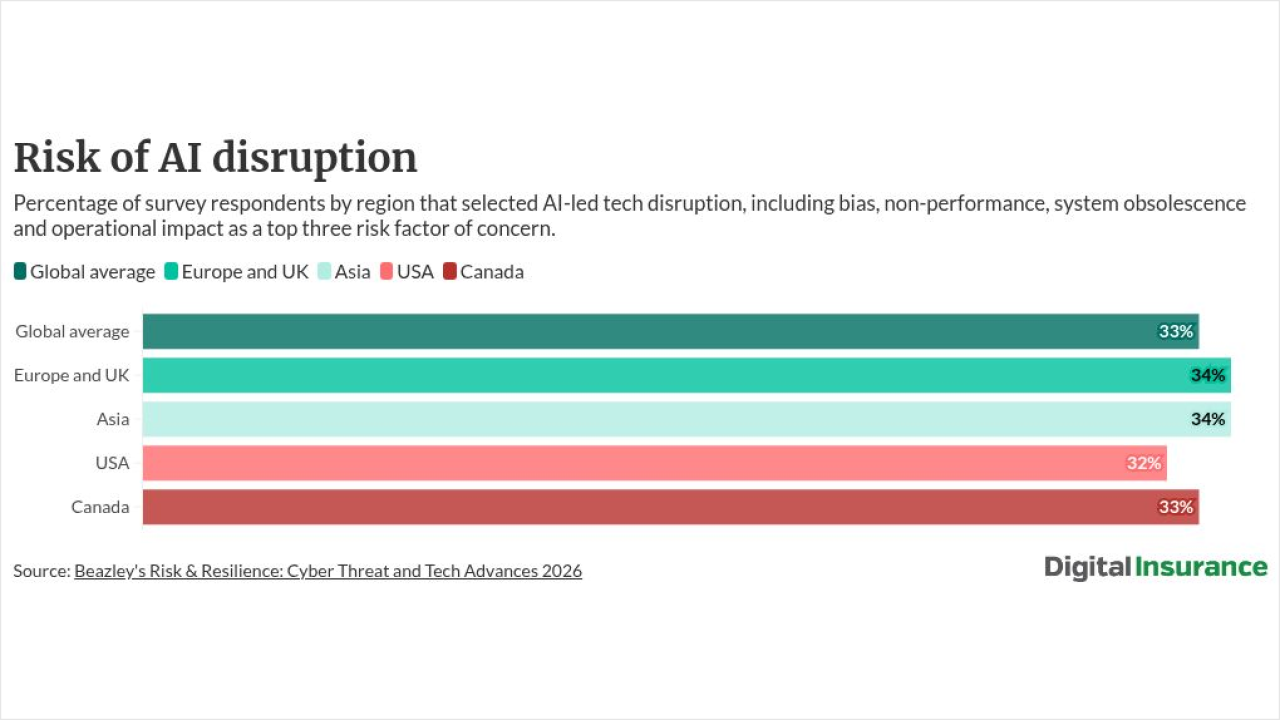

Insurers should stress-test clients' cyber resilience assumptions: Beazley's 2026 Risk & Resilience report finds 83% of U.S. executives believe they could fully recover financially from a cyberattack, even as unpreparedness levels ticked up from 2025. Cyber threats top concerns for 31% of global business leaders, with 33% flagging AI specifically as a primary risk — yet 35% are investing in AI to build resilience and 33% are increasing cybersecurity spending. The report warns that AI introduces compounding exposure across IP, regulatory and operational lines, and that supply-chain interconnectivity is accelerating systemic risk.

AV cyber gaps demand layered coverage review

Insurers covering autonomous vehicles face compounding liability exposure where cyber and motor vehicle policies fail to interlock, creating "gray areas" that could leave operators unprotected in a mass incident. Actuaries should use scenario and catastrophe modeling to compensate for insufficient real-world data on higher-level AVs, which are still too new for statistically reliable risk assessment. When underwriting AV fleets, confirm that commercial business interruption coverage explicitly addresses cyber-triggered vehicle outages: a large-scale attack disabling thousands of vehicles would test most existing policy language. Carriers should also map multi-party liability chains spanning manufacturers, software developers and fleet operators before a claim triggers litigation over fault attribution.

Verify data quality before trusting AI claims decisions

Insurers deploying AI for claims and underwriting must audit data quality before granting the tools decision-making authority, according to technology and legal executives. Incomplete, biased or siloed data produces flawed output — eroding staff and consumer trust in ways that stall adoption. Before implementation, confirm data is clean, unbiased and consolidated rather than fragmented across legacy systems. On the back end, mandate human review of all AI-generated decisions; experienced practitioners remain essential for validating output reliability. Confidence gaps can also stem from end-user inexperience or organizational culture, making change management as critical as the technology itself.

Annuity growth hinges on data standards, not just AI

Surging annuity demand is exposing a deeper problem than legacy systems: the absence of standardized data exchange across carriers, distributors and intermediaries. Carriers running five to 10 policy administration systems with manual, paper-driven workflows face processing times that stretch weeks or months. The Insured Retirement Institute's carrier-to-carrier initiative is working to align participants on common transaction models that could compress timelines to intraday. Insurers should prioritize SaaS platforms that enforce consistent workflows over custom integrations, which don't scale in a many-to-many market. AI delivers limited ROI without that data foundation — addressing symptoms rather than structural fragmentation.

6 steps to personalize life and annuity products at scale

Life and annuity carriers risk falling further behind as customer expectations for real-time, adaptive products outpace legacy system capabilities — and the cost of inaction compounds each quarter. Carriers should pursue six structural upgrades: modular product configuration, standardized enterprise data, API-first third-party integration, real-time underwriting, AI governance frameworks, and redesigned customer lifecycle interactions. Critically, AI layered onto legacy infrastructure stalls at scale; data modernization must come first. Carriers that treat personalization as a structural shift — not a feature enhancement — will gain competitive ground, while those stretching outdated systems face rising operational costs and lost market opportunity.

Quality engineering is the new QA for P&C insurers

P&C insurers accelerating core system modernization must retire traditional, late-stage quality assurance in favor of continuous quality engineering — or risk delivery failures as release cycles shorten and integrations multiply. CIOs and CTOs should integrate AI-powered code review at the development stage to catch defects before they reach downstream testing, then layer in automated regression and workflow testing to validate complex business processes end-to-end. Key metrics to track: regression testing time, post-release defect rates, release frequency, and team productivity gains from automation. Insurers that treat quality as a strategic delivery capability — not a checkpoint — will move faster to market while maintaining the system stability regulators require.

This roundup was created with AI assistance. A Digital Insurance editor reviewed each item before publication.